Just Sunlight

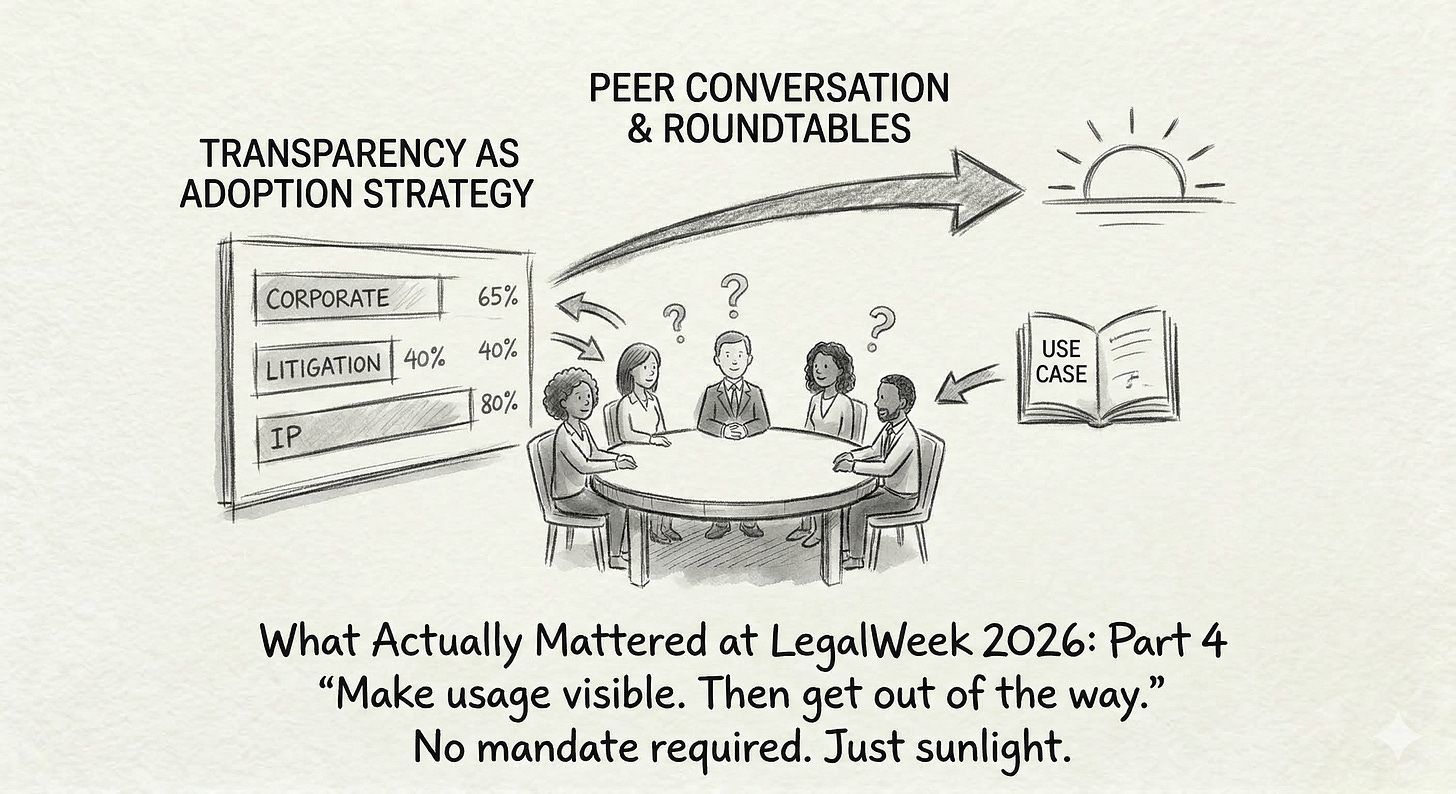

The adoption tactic nobody expected to work: making AI usage visible by practice group — and then stepping back.

Series: What Actually Mattered at LegalWeek 2026 — Part 4 of 6

There’s a persistent assumption in law firm leadership that AI adoption needs to be mandated. Pushed from the top. Measured by compliance. Enforced through policy.

LegalWeek offered a different model — one that’s simpler, cheaper, and apparently more effective.

Make usage visible. Then get out of the way.

Transparency as Adoption Strategy

Several firms described a version of the same tactic: they publish AI usage data — often Harvey adoption metrics, but sometimes broader tool engagement numbers — broken down by practice group.

That’s it. No mandate. No minimum usage requirement. No punitive measures for groups that lag behind. Just the data, visible to everyone.

What happens next is predictable if you’ve ever worked in a partnership. Competitive dynamics kick in. The corporate group sees that litigation is running at 40% adoption. The IP group sees that employment is outpacing them. Partners start asking their associates why the numbers look the way they do.

This creates three effects simultaneously: friendly competition, peer pressure, and organic knowledge sharing. The groups that are ahead start fielding questions from the groups that aren’t. The knowledge doesn’t flow through a central training programme — it flows through the firm’s natural social architecture.

I find this approach compelling because it respects how law firms actually work. Partnerships are peer-driven organisations. Top-down mandates create resistance. Visible data creates conversation. And conversation, in a partnership, is how things change.

Practice Group Roundtables

The second tactic that came up repeatedly is even lower-ceremony: practice group AI roundtables.

The structure is minimal. You convene representatives from each practice group. Each group shares one real use case — something they’ve actually done with AI, not something theoretical. The group discusses what worked, what didn’t, and whether the use case is transferable.

That’s the whole format. No keynotes. No vendor demos. No slides. Just practitioners telling other practitioners what they tried and what happened.

The value here isn’t in any single use case. It’s in the normalisation. When a senior partner in real estate describes using AI to draft lease abstracts, that gives the cautious partner in tax permission to try something similar. Use cases are the social proof that no amount of training material can replicate.

I’ve been experimenting with a version of this in my own context, and the constraint that makes it work is the specificity requirement. “One real use case” forces people past the theoretical and into the concrete. It also keeps the sessions short, which means people actually show up.

What’s Actually Happening Here

Both of these tactics — usage transparency and roundtables — are doing something that top-down adoption programmes struggle with. They’re creating social conditions where using AI becomes the norm rather than the exception.

That’s a fundamentally different approach from the standard playbook. The standard playbook says: train people, give them access, and measure adoption. These tactics say: make the behaviour visible, create peer contexts where it’s discussed, and let the social dynamics of the firm do the rest.

It won’t work everywhere. It won’t work for every practice group. And it won’t replace the need for solid training, good tooling, and clear governance. But as a complement to those things — as the social layer that makes everything else stick — I haven’t seen anything more effective.

No mandate required. Just sunlight.

Next in this series: The hard conversations firms are avoiding — on talent, pricing, and the compensation question nobody wants to answer.

Andrew is a Director of AI and Innovation at a large Canadian law firm. He writes about what AI adoption actually looks like from inside the institution.