The Barriers to AI Adoption Are the Job

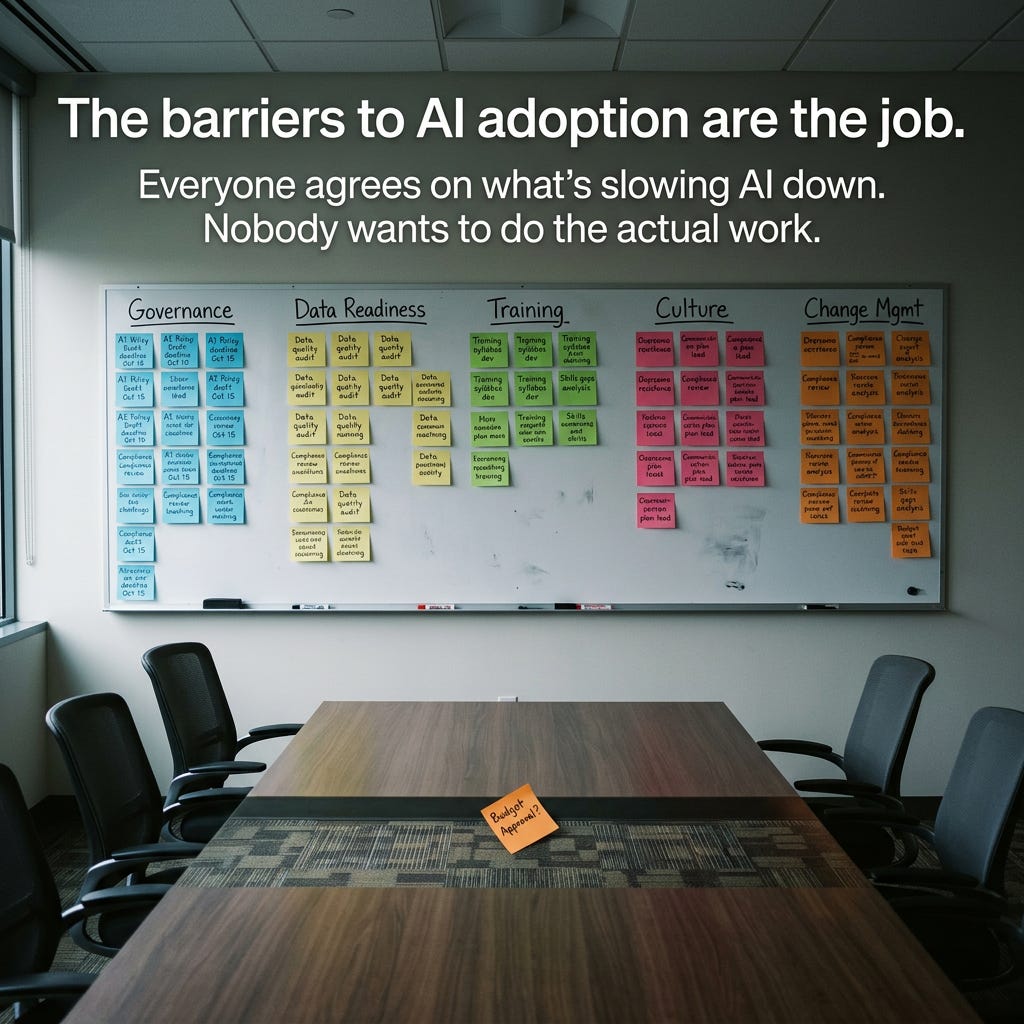

Everyone agrees on what’s slowing AI down. Nobody wants to do the actual work.

Every few weeks, a new report lands cataloguing the barriers to enterprise AI adoption. Poor data management. Incomplete governance. Lack of training. Unclear ROI. Resistance to change.

No Jitter published one this week. BCG has one. McKinsey’s latest State of AI survey says 88% of organizations report using AI in at least one business function — but nearly two-thirds haven’t begun scaling it across the enterprise.

An HBR piece from earlier this year put a finer point on it: AI initiatives stall not because the technology fails, but because employees’ anxiety about relevance, identity, and job security drives surface-level adoption without real commitment.

None of this is new. And that’s exactly the problem.

We’ve been listing the same barriers for two years. The list hasn’t changed. Which raises an uncomfortable question: if we know what the barriers are, why haven’t we removed them?

The Barrier List Is Actually a Job Description

I lead AI enablement and delivery at a large Canadian law firm. My charter covers generative AI governance, production infrastructure decisions, cross-functional technology guidance, and organizational design for autonomous delivery teams.

When I read reports listing the top barriers to AI adoption, I don’t see obstacles. I see my to-do list.

Data readiness? That’s the conversation with InfoSec about which categories of data we can and can’t share with AI tools, and under what conditions. It’s the ongoing discussion about zero data retention and data residency requirements that shapes every infrastructure decision we make. And these aren’t theoretical concerns. The U.S. CLOUD Act allows American law enforcement to demand data held by U.S.-based providers regardless of where that data is physically stored. A Canadian firm whose client data sits on a Montreal server is still exposed if the AI tool’s provider is U.S.-based. In June 2025, a Microsoft executive told the French Senate under oath that he couldn’t guarantee European data would be safe from U.S. authorities. The same logic applies to every law firm using a U.S.-owned cloud AI service.
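One way to make that conversation concrete is to write the sharing rules down as a check that can be run against any proposed tool. The sketch below is hypothetical: the data categories, the rules attached to them, and the tool name are assumptions for illustration, not any firm’s actual policy.

```python
from dataclasses import dataclass
from typing import Optional, Set, Tuple


@dataclass(frozen=True)
class AITool:
    name: str
    provider_jurisdiction: str  # where the provider is headquartered, e.g. "US" or "CA"
    data_region: str            # where the data is physically stored
    zero_data_retention: bool


@dataclass(frozen=True)
class CategoryRule:
    needs_zero_retention: bool
    allowed_jurisdictions: Optional[Set[str]]  # None means any jurisdiction


# Assumed policy table; a real one would come out of the InfoSec discussion.
POLICY = {
    "public": CategoryRule(False, None),
    "internal": CategoryRule(True, None),
    "client_confidential": CategoryRule(True, {"CA"}),
}


def may_share(category: str, tool: AITool) -> Tuple[bool, str]:
    """Return (decision, reason) for sharing a data category with a tool."""
    rule = POLICY.get(category)
    if rule is None:
        return False, "unknown category: deny by default"
    if rule.needs_zero_retention and not tool.zero_data_retention:
        return False, "tool does not offer zero data retention"
    # The check is on who owns the provider, not on tool.data_region:
    # storage location alone does not settle the exposure.
    if (rule.allowed_jurisdictions is not None
            and tool.provider_jurisdiction not in rule.allowed_jurisdictions):
        return False, "provider jurisdiction not permitted, regardless of storage region"
    return True, "allowed"


# A U.S.-owned tool that stores data in Canada and retains nothing.
tool = AITool("ExampleAssistant", "US", "CA", zero_data_retention=True)
decision, reason = may_share("client_confidential", tool)
print(decision, reason)
```

The check deliberately ignores `data_region` for the jurisdiction rule, which mirrors the point about the Montreal server: where the data sits does not change who can be compelled to produce it.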

Governance gaps? That’s the policy work: figuring out what guardrails are necessary when you’re building internal infrastructure like MCP servers that connect AI tools to live systems. The answer changes as the tools evolve, which means the governance has to evolve with them.
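A guardrail of this kind can be as small as a gate that sits in front of every tool call. The sketch below is a minimal illustration in plain Python and does not use any MCP library; the tool names and the rule that writes need a named approver are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolRule:
    writes_to_live_system: bool


# Hypothetical allowlist: any tool not listed here is denied outright.
ALLOWED_TOOLS = {
    "search_documents": ToolRule(writes_to_live_system=False),
    "update_matter_record": ToolRule(writes_to_live_system=True),
}


def check_tool_call(tool_name: str, approved_by: Optional[str] = None) -> str:
    """Return 'allow', 'hold_for_approval', or 'deny' for a requested tool call."""
    rule = ALLOWED_TOOLS.get(tool_name)
    if rule is None:
        return "deny"
    # Assumed rule: anything that changes a live system needs a named approver.
    if rule.writes_to_live_system and not approved_by:
        return "hold_for_approval"
    return "allow"


print(check_tool_call("search_documents"))
print(check_tool_call("update_matter_record"))
print(check_tool_call("delete_everything"))
```

Keeping the rules in a table rather than in the server code is what lets the governance change as the tools do: adding or tightening a rule is a data change, not a rebuild.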

The other barriers on the list — training, middle management alignment, cultural resistance — those show up too. Every organization doing this work recognizes them. The question isn’t whether they’re real. It’s whether anyone is resourced to actually address them.

The barrier list isn’t a research finding. It’s a scope of work.

The Real Reason the List Doesn’t Change

From the inside, the pattern is pretty clear: the barriers persist because removing them requires a kind of work that most organizations don’t want to fund, staff, or prioritize.

Governance work is slow, political, and unglamorous. Nobody gets promoted for writing an AI acceptable use policy. Nobody gets a conference keynote for building a data classification framework. These are infrastructure projects — essential, invisible, and easy to defer.

Training is continuous, not one-shot. You can’t run a lunch-and-learn in Q1 and call it done. AI tools change. Use cases evolve. New risks emerge. Training has to be ongoing, role-specific, and practical. That takes dedicated time from people who are already stretched.

Middle management is where adoption lives or dies. A Workera analysis from earlier this year noted that most adoption bottlenecks sit in the middle of the organizational chart — managers who struggle to set expectations for AI-assisted work, don’t know what “good” looks like, and avoid the topic because it raises uncomfortable questions about headcount and value.

And cultural resistance isn’t about employees being Luddites. The HBR research showed it’s really about identity — people worry that using AI makes them look replaceable, or that their expertise is being devalued. You can’t train your way out of that. It requires sustained, honest messaging about what AI actually changes and what it doesn’t.

Every one of these barriers is addressable. None of them is easy. And most of them require organizational commitment that goes well beyond the technology team.

Where the Work Actually Lives

When I look at the barrier list through the lens of what has to happen, the work sorts itself pretty naturally.

Some of it you can systematize. Governance templates, usage policies, risk assessment frameworks, data classification standards — these are repeatable artifacts. Build them once, adapt across the organization. AI can even help with its own adoption here. Use it to draft the policies, generate training materials, structure rollout plans.

Some of it requires people working alongside people. Helping managers evaluate AI-assisted work. Coaching teams on where AI fits their specific workflows. Having the uncomfortable conversations about what changes when a task that used to take four hours now takes forty minutes. You can support this with tools and structured conversations, but you can’t skip the human part.

And some of it you just have to do the slow way. Building trust. Shifting culture. Showing through consistent action that efficiency gains won’t quietly become headcount cuts. That the goal is better work, not cheaper workers. No technology accelerates this.

Most organizations want to spend their AI budget on the first kind. The actual work is mostly the second and third.

The Patience Problem

There’s an additional dynamic that the barrier reports don’t capture: the gap between executive expectations and organizational readiness.

Leadership sees the reports about AI productivity gains. They hear the vendor pitches. They attend the conferences. They come back wanting to know why the organization isn’t moving faster.

The honest answer is: because we’re doing the barrier removal work. And that work doesn’t produce demo-ready results on a quarterly cadence.

Governance frameworks aren’t impressive in a board presentation. Training programs don’t generate viral LinkedIn posts. The slow, steady work of building organizational readiness for AI is the least visible and most important investment a company can make right now.

The organizations that are furthest along in AI adoption aren’t the ones that skipped the barrier work. They’re the ones that started it earlier and funded it properly. They invested in the boring infrastructure while everyone else was running pilot programs.

What Would Actually Change Things

The next time one of these reports drops, resist the urge to nod along and move on.

Instead, ask: which of these barriers are we actively working to remove? Who owns each one? What resources are behind it? What does progress look like on a 90-day horizon?

If you can’t answer those questions, the barrier list isn’t research. It’s a mirror.
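Those four questions fit in a very small register. The sketch below is one hypothetical way to keep it; the field names and sample entries are assumptions, not a prescribed format.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Barrier:
    name: str
    owner: Optional[str] = None             # who owns it
    resources: Optional[str] = None         # what budget or time is behind it
    milestone_90_day: Optional[str] = None  # what progress looks like in 90 days


def unanswered(barrier: Barrier) -> List[str]:
    """List the questions that still have no answer for this barrier."""
    gaps = []
    if not barrier.owner:
        gaps.append("owner")
    if not barrier.resources:
        gaps.append("resources")
    if not barrier.milestone_90_day:
        gaps.append("milestone_90_day")
    return gaps


# Illustrative entries only.
register = [
    Barrier("data readiness", owner="InfoSec lead", resources="0.5 FTE",
            milestone_90_day="data classification standard approved"),
    Barrier("training", owner="Learning team"),
    Barrier("middle management alignment"),
]

for barrier in register:
    gaps = unanswered(barrier)
    status = "actively worked" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{barrier.name}: {status}")
```

A barrier with any empty field is one the organization is acknowledging rather than working on.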

These barriers have been known for two years. They’ll still be known next year if the only response is acknowledging them in another planning deck.

Somebody has to do the work. In most organizations, that role either doesn’t exist yet or doesn’t have the authority to act.

That’s the barrier nobody puts on the list.

I’d be curious to hear which barrier your organization spends the most time acknowledging and the least time actually working on.