The hidden second clause in the AI productivity story

A new study suggests the trade most of us think we’re making — skill for speed — often delivers neither.

Anthropic just published a study that quietly inverts how we talk about AI productivity. They paid 52 professional developers to learn a new Python library in 35 minutes. Half got an AI assistant. Half didn’t. Then everyone took the same comprehension quiz, with no AI.

The AI group scored 17 percentage points lower. Nearly two letter grades. A real effect, not noise: Cohen’s d of 0.738, if you care about the statistics.
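For anyone who does care about the statistics: Cohen’s d is the gap between two group means divided by their pooled standard deviation. By the usual rule of thumb, 0.2 is small, 0.5 is medium and 0.8 is large, so 0.738 sits near the top of that range. Here is a minimal sketch of the common pooled form. The function name and the scores are made up for illustration, they are not the study’s data, and the paper may compute the statistic slightly differently.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)


# Made-up scores for illustration only, NOT the study's data.
control = [2, 4, 6]
assisted = [1, 3, 5]
print(cohens_d(control, assisted))  # 0.5
```

The point of dividing by the spread is that the same raw gap counts for more when scores within each group are tightly clustered.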

That part has been making the rounds. The part that hasn’t is the productivity finding sitting right next to it.

On average, the AI group wasn’t meaningfully faster.

People spent about the same total time on the task. They just shifted it from coding to interacting with the assistant. Some of them spent six minutes composing a single query in a 35-minute task. Which means the trade most people assume they’re making (accept some skill loss in exchange for speed) often isn’t even on offer. They lost the skill. They didn’t get the speed.

The variance is the story

What makes this study different from the usual productivity-of-AI-tools paper is that the researchers didn’t just measure averages. They watched every screen recording. And they found that the average is hiding something more useful than it’s showing.

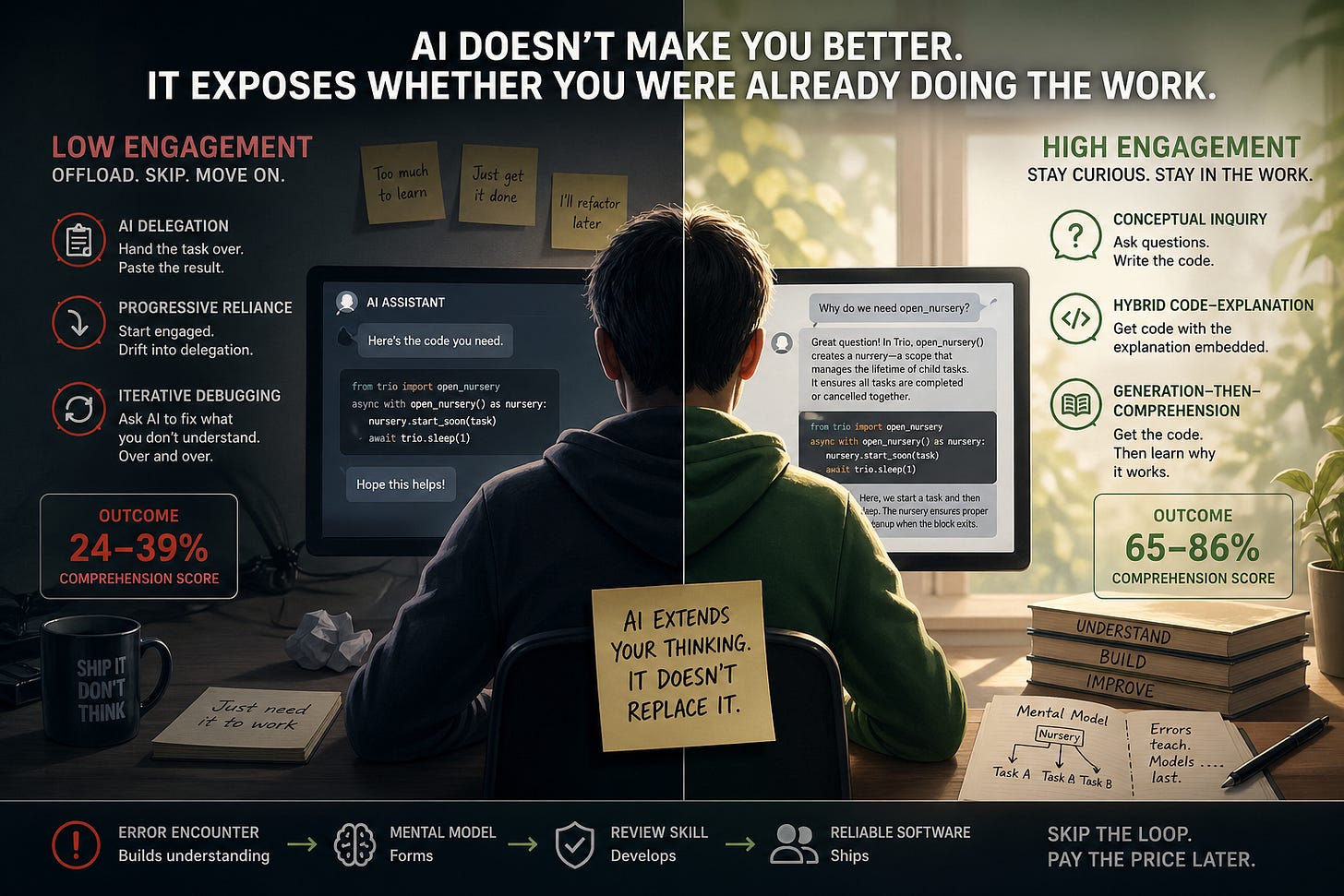

There were six distinct ways people used the AI assistant. The researchers grouped them by quiz outcome. Three patterns averaged between 24 and 39 percent on the comprehension quiz. Three averaged between 65 and 86 percent.

The low-scoring patterns share a structure. AI Delegation — handing the whole task over and pasting the result. Progressive Reliance — starting engaged, drifting into delegation as the clock ticks. Iterative Debugging — asking the AI to fix things you don’t understand, over and over.

The high-scoring patterns share a different structure. Conceptual Inquiry — asking the AI questions, but writing the code yourself. Hybrid Code-Explanation — asking for code with the explanation embedded. Generation-Then-Comprehension — getting the code, then asking the AI to teach you why it worked.

You can guess what divides the two halves. Cognitive engagement. Not how much AI was used. Not which tool. Not how skilled the developer was going in. Whether they stayed in the work or stepped out of it.

The participant feedback in the qualitative section is the part that stuck with me. Several developers in the AI group volunteered, unprompted, that they had “felt lazy,” or wished they had paid more attention to the explanations the AI gave them, or noticed afterward that there were “still a lot of gaps in their understanding.” Cognitive offloading from the inside. They could feel it happening in the moment and didn’t stop it, because the task pressure was telling them to keep moving.

Why debugging is the canary

The biggest gap between the groups wasn’t on the conceptual questions. It was on debugging.

The no-AI group hit errors. Specifically, errors from Trio, the asynchronous Python library they were learning: runtime warnings about coroutines that were never awaited, type errors from passing the wrong kind of object. These errors force you to learn how the library actually works, because you can’t skip past them without forming a mental model. The AI group skipped past most of them. Their code worked the first time, more often than not. They never built the debugging muscle, because they never had to.
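To make the “never awaited” class of error concrete, here is a minimal sketch of the mistake and its fix. It is an assumption-laden stand-in: it uses the standard library’s asyncio rather than Trio so it runs without extra installs, and every function name in it is hypothetical, not taken from the study’s task. The warning itself comes from Python, so it reads the same under either library.

```python
import asyncio
import gc
import warnings


async def fetch_value():
    """Hypothetical async helper standing in for any library call."""
    await asyncio.sleep(0)
    return 42


async def buggy():
    # Bug: no `await`, so this returns a coroutine object, not 42.
    return fetch_value()


async def fixed():
    # Fix: awaiting actually runs fetch_value and yields its result.
    return await fetch_value()


def run_and_report(async_fn):
    """Run async_fn; return (result, list of 'never awaited' warnings)."""
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")
        result = asyncio.run(async_fn())
        if asyncio.iscoroutine(result):
            # Rebinding drops the last reference to the unawaited coroutine;
            # the warning fires when the interpreter finalizes it.
            result = None
        gc.collect()
    messages = [
        str(w.message)
        for w in caught
        if issubclass(w.category, RuntimeWarning)
        and "never awaited" in str(w.message)
    ]
    return result, messages


if __name__ == "__main__":
    print(run_and_report(buggy))
    print(run_and_report(fixed))
```

The buggy version doesn’t crash. It quietly hands back the wrong kind of object and leaves a warning behind, which is exactly the sort of failure you only learn to read by running into it.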

This is the part that should make organizations nervous.

The dominant workflow proposal for AI-assisted software development is “AI writes the code, humans review it.” Sometimes packaged as “human in the loop.” It depends on a workforce that can read code well enough to catch what’s wrong with it. But if AI assistance during the skill-formation period systematically removes the error-encounter loop that builds review skill, you’re producing a workforce structurally unable to do the review you’ve designed your safety story around.

That isn’t a future risk. It’s a workflow currently being deployed.

The hidden second clause

The dominant claim about AI productivity has been: AI makes you faster. The fine print, based on this evidence, is — only if you stay cognitively engaged. And the empirical pattern says most people don’t.

There’s a clean way to think about this. AI doesn’t make you better. It amplifies whether you were doing the thinking in the first place.

For people who already engage with their work — who ask why something is structured the way it is, who validate against a model of what good looks like, who treat tools as collaborators rather than dispensers — AI extends what they can do. For people who don’t, AI gives them faster ways to look productive while the underlying skill quietly erodes.

This isn’t a moral observation. It’s a structural one. The patterns that preserved learning in the study weren’t the patterns of people working harder. They were the patterns of people staying in a particular mode of attention.

What this changes

The implications break in three directions.

For individuals: how you use the tool matters more than which tool you use. Asking for code without asking for explanation is a learning shortcut that costs you compounding interest later. The patterns that work are slower in the moment and pay off in retention.

For teams: if you’re scaling junior contributions with AI, you need to deliberately build the engagement loops back in. Errors are not friction to eliminate. They’re where skill formation actually happens. Removing them feels like productivity. It produces fragility.

For organizations: the AI workflow you’re designing assumes a level of human capability you may be actively eroding through the same workflow. That’s not a contradiction you can prompt your way out of. It’s an architectural one.

The original framing — that AI is a productivity tool — was always slightly off. AI extends the cognitive work you’re already doing. Without that work underneath, the extension produces motion without progress.

AI doesn’t make you better. It exposes whether you were already doing the work.

Read the full Anthropic study: How AI Impacts Skill Formation by Judy Hanwen Shen and Alex Tamkin.
