The Oven Doesn't Make the Restaurant

LegalWeek showed that every firm now has AI tools. Almost none have changed how they work.

Series: What Actually Mattered at LegalWeek 2026 — Part 1 of 6

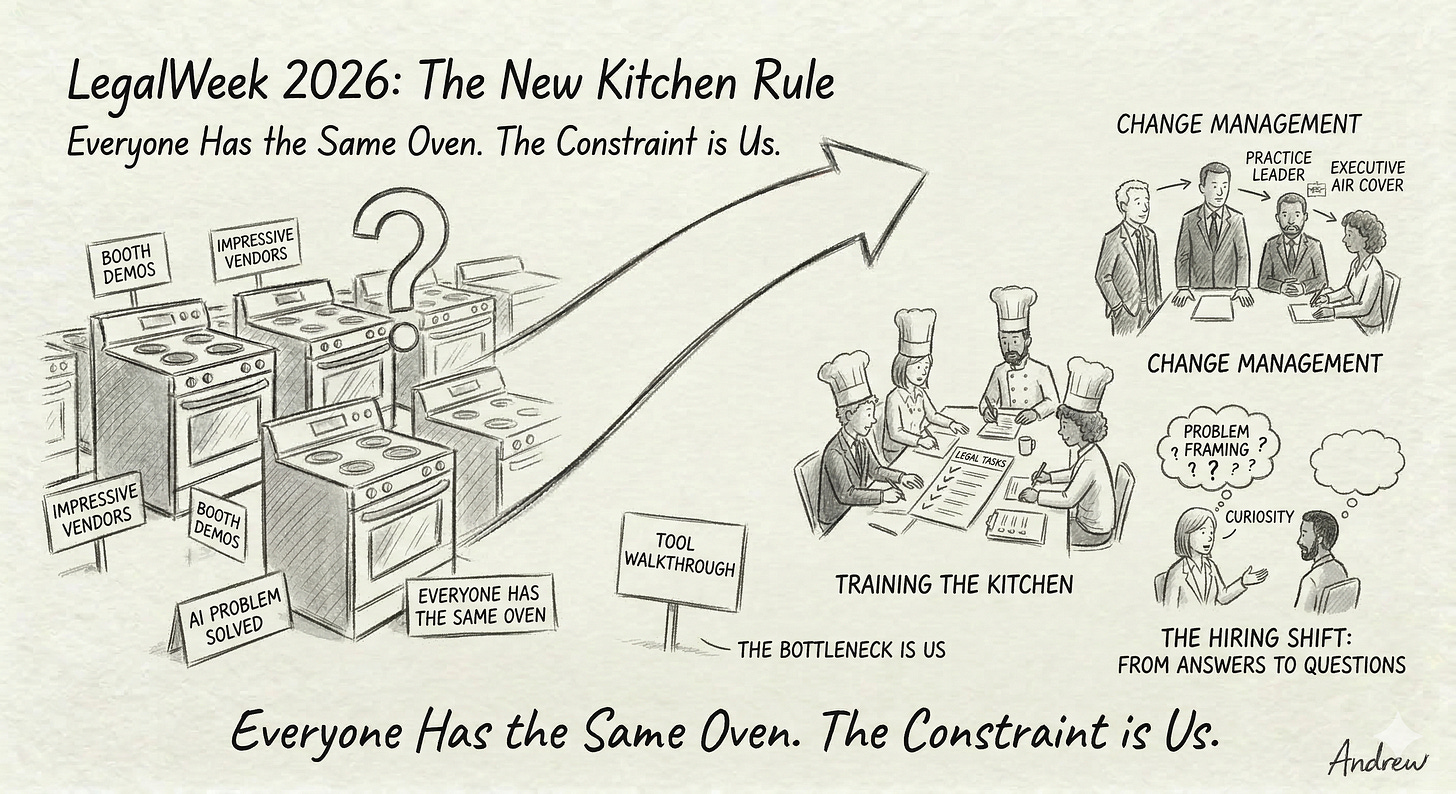

If you walked the expo floor at LegalWeek this year, you’d be forgiven for thinking the AI problem in legal is solved. Every booth had a demo. Every demo was impressive. Every vendor had a pitch about how their particular flavour of generative AI was going to reshape legal work.

And yet the most honest conversations — the ones in the hallways, the ones over coffee, the ones where people dropped the marketing voice — kept circling back to the same uncomfortable admission: the tools aren’t the bottleneck anymore.

The bottleneck is us.

Everyone Has the Same Oven Now

Here’s the analogy that kept surfacing in different forms across panels and roundtables: buying a better oven doesn’t make you a better restaurant. Training the kitchen does.

That lands differently depending on where you sit. If you’re a vendor, it’s a threat — because differentiation is collapsing. If you’re a firm leader, it’s a mirror — because the constraint on AI adoption isn’t the technology. It’s habits, trust, incentives, and whether the tool actually fits into the way people already work.

Firms that treat AI as a software rollout are stalling. I’ve watched this pattern from the inside. You announce a tool, run an onboarding webinar, send a few follow-up emails, and then wait for adoption numbers that never come. It feels like a deployment. It is not a deployment. It’s an organisational change problem — the kind where you need to rebuild workflows, shift expectations, and get very honest about what’s actually blocking people.

The firms that are moving aren’t the ones with the best tools. They’re the ones that treat adoption as a change management initiative with executive air cover, practice-group-level ownership, and a willingness to redesign processes rather than bolt AI onto existing ones.

The Hiring Shift Nobody’s Talking About Enough

There was a quieter theme running underneath the big panels that I think matters more than most of what made the main stage. Firms are changing how they evaluate talent — not just AI specialist talent, but lawyers.

The shift is from answers to questions.

The best hires, as several panellists put it, don’t claim mastery. They ask sharp, practical questions. They frame problems well. They spot risks that others walk past. They stay curious when the ground is uncertain.

This applies equally to the lawyer you’re hiring for your M&A team and the AI strategist you’re hiring for your innovation group. The signal isn’t “I know how to use Harvey” — it’s “I understand what this tool can’t do, and I know when to stop trusting it.”

I’m told interview rubrics at several firms are being rewritten to weight three things more heavily: problem framing, risk spotting, and curiosity under uncertainty. That’s a meaningful shift. It says something about where firms think AI is actually heading: not toward a world where lawyers know less, but toward a world where the ability to ask the right question becomes the scarce skill.

“Here Are All the Buttons” Is Dead

The third theme that kept recurring is the death of feature-based training. I don’t think this one is controversial anymore, but it’s worth saying clearly: if your AI training programme starts with “Here’s the interface,” it’s failing.

The model that’s working — the one that came up in almost every adoption-focused session — starts with a specific legal task. Not a tool walkthrough. Not a prompt engineering workshop. A realistic, time-pressured legal task that mirrors actual work.

One session described it this way: the goal is not to teach a lawyer how to use Harvey. The goal is to teach a lawyer how to draft a first-pass asset purchase agreement under time pressure, using whatever tools are available — including Harvey, but not limited to it.

That reframing matters. When you train to the task, the tool becomes incidental. When you train to the tool, you’ve built a dependency that breaks every time the vendor ships an update.

I’ve been thinking about this a lot in my own work. The task-based model isn’t just better pedagogy — it’s better strategy. It forces you to identify the twenty or so repeatable legal tasks that actually drive your practice, and then build training, tooling, and measurement around those. Everything else is noise.

Next in this series: How knowledge teams are quietly becoming the most important function in the modern law firm — and why vendor onboarding is now a strategic bottleneck.

Andrew is a Director of AI and Innovation at a large Canadian law firm. He writes about what AI adoption actually looks like from inside the institution.