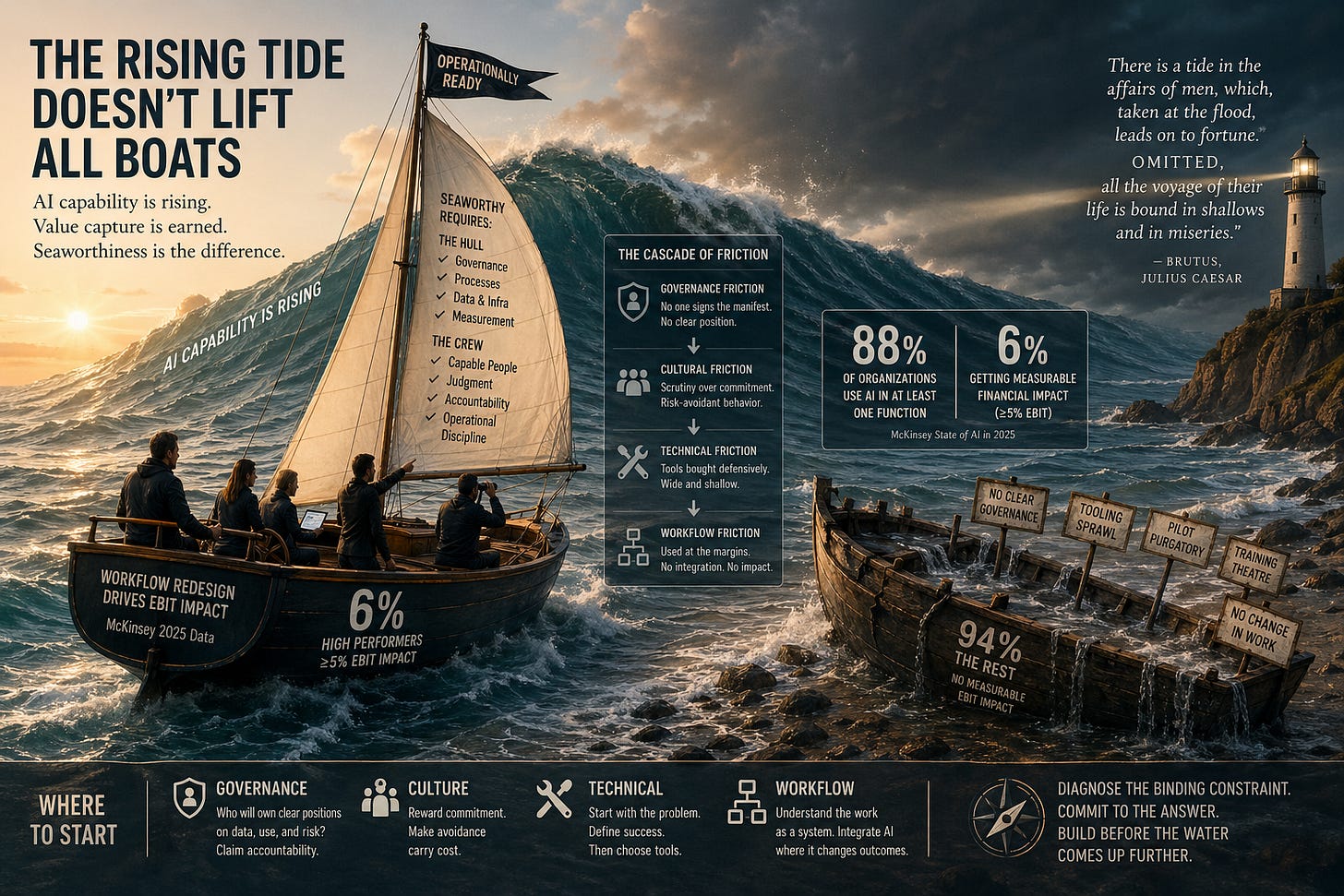

The rising tide doesn’t lift all boats

What McKinsey’s 2025 data actually says about AI value capture, why the playbook executives are following contradicts their own findings, and what to read instead.

Eighty-eight percent of organizations now use AI in at least one business function. Six percent are getting measurable financial impact from it.

Both numbers come from the same source: McKinsey’s State of AI in 2025, published last November and based on responses from 1,993 organizations across 105 countries. The first number is in the headline. The second is in the methodology, where “high performers” — defined as the 6% reporting at least 5% EBIT impact from AI use — are described as a small but meaningful cohort.

The gap between those two numbers is the entire story.

What you do with that gap depends on whose framing you accept. McKinsey’s framing is that the 6% are pulling ahead because they are committing more aggressively to the playbook: pursuing what the report calls transformative innovation, redesigning workflows, scaling faster, investing more. The implication for the other 94% is straightforward: do more of the same thing, harder.

That is the firm selling transformation engagements explaining why most transformation engagements aren’t working.

This essay is about why that framing is wrong, why the data inside the same report points at a different answer, and what executives should actually do with the gap. It draws on Shakespeare, Sutton, three years of operating AI inside a regulated industry, and McKinsey’s own contradictory evidence — and ends with a diagnostic question every executive can answer for themselves.

The half of the metaphor most people have stopped reading

Most executives carry around a half-version of an old metaphor: a rising tide lifts all boats. The implication is that benefit is automatic. Buy the licenses. Enable the seats. The water does the work.

There is a more honest version of the same metaphor in Shakespeare. Brutus, in Julius Caesar, says “there is a tide in the affairs of men, which, taken at the flood, leads on to fortune.” Most quotations stop there. The next line is the part that should keep executives up at night: “Omitted, all the voyage of their life is bound in shallows and in miseries.”

The tide doesn’t lift everyone. It lifts the ones who are ready to take it. The rest get stranded.

This isn’t a stylistic distinction. It’s a structural one. A boat with holes in the hull doesn’t rise with the water; it fills up and goes down faster. As the tide of AI capability rises — and it is rising, on a curve that doesn’t depend on anyone’s organizational readiness — the gap between seaworthy organizations and unseaworthy ones widens, not narrows.

Standing still is not neutral either. Competitor vessels are getting ready. The water is going up regardless of what any single executive does.

That is what the 88-vs-6 gap actually describes. Not a gradient of effort or commitment. A gradient of seaworthiness.

The vantage point matters here. Working inside a regulated industry sharpens this. When you cannot just deploy and learn — when data residency, regulator scrutiny, and partner trust constrain every decision — the gap between buying capability and capturing value becomes uncomfortably visible. You watch teams roll out impressive-looking pilots that produce no measurable change in how cases get worked or matters get billed. You watch licensing spend that nobody can defend in front of a finance committee. You watch the same governance committee revisit the same policy questions for the third quarter in a row because nobody wants their signature on the approval. Regulated industries don’t get to skip the operational work. The unregulated ones don’t either; they just discover it later, after the licensing spend is already sunk.

Why this looks like Sutton’s Bitter Lesson, and isn’t

Anyone watching the AI capability curve from a distance can recognize the trajectory. It tracks a pattern Richard Sutton named in 2019 in an essay called The Bitter Lesson. His argument was that across seventy years of AI research, general methods that scale with computation have eventually beaten approaches built on human knowledge of the domain. Chess. Go. Speech recognition. Computer vision. The pattern repeats: human-knowledge-based methods feel principled and produce early wins, then get overtaken by general methods plus more compute. Sutton’s claim has aged exceptionally well. Large language models are the most emphatic confirmation of his thesis since he wrote it.

Read carelessly, the Bitter Lesson is the case for executive optimism. The tide is rising because compute keeps growing. General methods keep absorbing what humans used to scaffold. The eventual end state is models that handle the integration work themselves. Just wait. Just deploy. The capability will arrive; the value will follow.

That reading is wrong, in a specific way.

Sutton was making a claim about model capability and training paradigms — what wins benchmarks at the research frontier. He was not making a claim about what creates value in deployed enterprise systems. The frontier of value creation in 2026 is not the model layer. It is the layer around the model: governance, retrieval, evaluation, workflow integration, accountability, measurement. Almost all of that is what Sutton called “human knowledge” — exactly the kind of scaffolding the Bitter Lesson predicts will eventually be absorbed. But it has not been absorbed yet, and won’t be for a while, because it is not benchmark-shaped. It is context-shaped, organization-shaped, accountability-shaped. There is no test set for “did the matter close faster” or “would the partner sign off on this advice.”

The honest synthesis is that Sutton’s lesson and the operational discipline argument live at different layers. Sutton describes the trajectory of model capability, which is rising reliably. The operational discipline argument describes what happens between that rising capability and the outcomes a board recognizes. That is exactly where the 94% are stuck. Both observations are true; they are not in competition. The executive who reads the Bitter Lesson as license to wait is making a category error, confusing what wins research benchmarks with what compounds in P&L.

What McKinsey’s report actually shows, and what it conveniently omits

Read the report carefully and a different argument emerges than the one in the executive summary.

McKinsey’s own correlation analysis tested twenty-five organizational attributes against EBIT impact from generative AI. The single biggest predictor was workflow redesign. Not licensing volume. Not tooling sophistication. Not training programs. Operational rewiring.

That finding is in their data. It is not in their headline recommendation. The headline recommendation is to pursue transformative innovation — a phrase that maps conveniently to a multi-year consulting engagement and not to anything an internal team could execute on its own. Their data identifies operational rewiring as the binding constraint. Their recommendation identifies engagement scope as the answer. Those are different things.

There is another revealing detail. The report identifies twelve “best practices for gen AI adoption and scaling.” Read them in sequence and notice what they describe: establishing a dedicated transformation office, creating a comprehensive change story, developing role-based capability training programs, defining a phased rollout roadmap, establishing employee incentives, mechanisms to incorporate feedback, fostering trust through structured communications. Each of these is a defensible activity. Together, they describe the typical scope of a transformation consulting engagement almost exactly. The list is not wrong. It is just suspiciously shaped.

The high-performer framing has its own problem: it is partly tautological. McKinsey defines high performers as the 6% getting EBIT impact, then observes that they redesign workflows and pursue transformation. That doesn’t establish causation. It is equally consistent with a different reading: organizations capable of producing real EBIT impact are also the ones with the institutional discipline to redesign workflows. The methodology and the outcome correlate. The report assumes the first causes the second.

There is also a quiet methodological choice worth surfacing. The EBIT impact figures are self-reported, by respondents who are typically the same executives sponsoring the AI initiatives in their organizations. There is no independent verification. People who have sponsored a major program and put their name on it are not neutral evaluators of whether the program worked. The 6% number may be generous; the actual share of organizations capturing real, board-defensible value from AI is probably lower.

This matters because the prescription most executives are following is built on these unexamined assumptions.

None of this is dishonest. Consulting firms make money on transformation programs. Their reports are not neutral observations of the AI landscape. They are demand generation for the engagements they sell. That is not a conspiracy theory. It is how the business model works. Executives know this about their own software vendors and somehow forget it about their consulting reports. The reports use the language of independent research and arrive accompanied by the prestige of the firm; both produce a halo that suspends ordinary critical reading.

The cleanest read of McKinsey’s own 2025 report is this: their data agrees that operational rewiring is the binding constraint on AI value capture. Their recommendation does not.

What seaworthy actually requires

A vessel that can take the tide needs two things, and you need both.

The first is the hull — operational readiness. Governance that can move at the speed of capability change. Procurement processes that start with a defined business problem rather than competitive anxiety. Data infrastructure that doesn’t break when the workload shifts. Measurement frameworks that distinguish adoption from value. These are the structural conditions that determine whether AI capability, once deployed, produces outcomes or evaporates into pilot purgatory.

The second is the crew — capable people. Practitioners who can see their own work structurally and identify where AI fits. People who can evaluate AI output with calibrated judgment rather than blind acceptance or reflexive rejection. Leaders who direct capability toward operational outcomes rather than activities.

Hull without crew is infrastructure waiting for capability nobody can direct. Crew without hull is capable individuals trapped in an unready organization. Most programs invest heavily in one and call it complete.

The version of this most executives miss is that both are operational disciplines. Hull readiness is obviously operational: governance, processes, infrastructure. But crew readiness is also operational. It is not produced by lunch-and-learns or tip sheets. It is produced by changing how work is decomposed, evaluated, and assigned. The training-program version of crew development is theatre. The work-redesign version is the actual thing.

What this looks like in practice is unglamorous. It looks like sitting with a team for an afternoon and watching them do their actual work, then identifying the four steps in their process that AI could change and the two steps it absolutely shouldn’t touch. It looks like building a measurement framework that distinguishes “we used the tool” from “the matter closed faster” or “the output was higher quality.” It looks like an executive willing to put their name on a governance position that will need to be defended six months from now when someone challenges it. None of these activities photograph well in a board deck. All of them produce more EBIT impact than another round of training rollouts.

The diagnostic question is which one is your binding constraint right now. A firm with strong governance and weak practitioner capability has a different next move than one with sophisticated practitioners trapped in a governance vacuum.

The cascade between friction points

The friction points reinforce each other in a cascade that is worth naming, because most executive interventions miss the dynamic and treat each as independent.

Governance friction comes first because it sets the conditions for everything that follows. When nobody will sign the manifest — when policy decisions get distributed until no individual is accountable — the organization cannot articulate what it is willing to do with AI. That ambiguity perpetuates cultural friction. In the absence of clear positions, the rational behavior is to scrutinize every proposed initiative for flaws rather than commit to one. Why would a manager stake their reputation on a course that has not been institutionally sanctioned?

Cultural friction in turn shapes technical friction. When the organization rewards skepticism and penalizes commitment, procurement defaults to broad, defensive purchases. Tools get bought because peers bought them. Licenses get distributed widely so no single deployment can fail visibly. The technical environment that emerges is wide and shallow rather than narrow and deep, optimized for political safety rather than operational impact.

Wide-and-shallow technical environments produce workflow friction inevitably. People have AI tools available but no specific operational problem the tools were procured to solve, no clear quality framework for output, and no model for what good integration looks like. They use the tools at the margins of their work — rewriting an email, summarizing a document — and never integrate them into the core processes that would actually produce measurable outcomes. The capable AI tools that do exist get used at a fraction of their potential because the users have not done the work of understanding their own workflow well enough to know where AI belongs.

The cascade is reinforcing, but it is not strictly linear. You can have workflow friction without governance friction. You can resolve technical friction while cultural friction persists. The point is that addressing one friction point in isolation often reveals how much the others have been compensating for it. Organizations that address only the technical layer — buying better tools — discover that the governance and cultural blockages were the real constraints. Organizations that try to address culture without resolving governance discover they are asking people to commit in an environment where commitment carries no institutional support.

Identifying which friction point is dominant is the executive’s actual job. Not all four. The one that is the binding constraint right now.

The asymmetry has inverted

For most of the last three years, executive avoidance was the rational position. Committing to a major AI program carried real personal risk: an underperforming initiative would carry the executive’s name. Avoiding carried no symmetric cost. Boards were not asking pointed questions. There was no peer benchmark to be measured against. The water was rising slowly enough that being late looked safe.

That has changed. Boards now ask comparative questions. Industry surveys publish utilization data. McKinsey’s own report turns the visibility up further — every executive whose firm sits in the 94% now has a number describing their position that their board has access to. The cost of being late has become measurable, and it is compounding quarter by quarter.

There is a second-order effect worth naming. The compounding gap between high performers and the rest is not just about EBIT impact. It is about the institutional learning that comes with operating AI seriously over time. Firms that have spent two years building governance muscle, measurement frameworks, and integrated workflows are not just ahead in adoption. They have built organizational capability that the late-arriving firm cannot replicate by deploying the same tools faster. The advantage is structural, not procurement-driven, and structural advantages compound on a different curve than catch-up effort can match.

The executive who commits to operational readiness now is making the lower-risk bet. The one waiting for clarity is making the bet that used to be safe and no longer is. Most have not repriced.

Where to start

The diagnostic question is straightforward: which friction point is your binding constraint right now?

If governance is the binding constraint, no investment in capability development will produce outcome. The starting move is identifying who is willing to own a clear position on data handling, acceptable use, and risk tolerance — and accepting that the position may need to be defended later. The friction does not dissolve when risk is eliminated. It dissolves when accountability is claimed.

If culture is the binding constraint, the work is changing the incentive structure so that commitment is rewarded and avoidance carries cost. This is the hardest of the four because it requires changing what the organization values. Most cultural interventions fail because they try to change rhetoric without changing reward; people read the actual signals.

If your binding constraint is technical — tools acquired because competitors acquired them, with no specific business problem they were procured to solve — the move is to start over with success criteria first. Identify the operational problem, define measurable success, then select technology against those criteria. The tools you have may or may not survive the analysis. Sunk cost is not a strategy.

If workflow is the binding constraint — your governance is reasonable, your culture is permissive, your tools are deployed, but nothing has changed in how work actually gets done — the gap is structural understanding of work itself. Practitioners need to see their own workflows as systems of operations rather than streams of tasks. That capability is not built by tip sheets. It is built by sitting with the work.

Most executives can answer the diagnostic question honestly if asked plainly. The hard part is not the diagnosis. It is committing to the answer.

The line worth remembering

Read McKinsey’s data. Don’t read McKinsey’s recommendation. The tide is rising, the playbook you are being sold will leave you stranded, and the boats that take the tide will be the ones whose hulls were built before the water came up.

There is still time to build. Not much.

The work I publish at AndrewLewisWasHere goes deeper on what AI operational readiness actually looks like inside a regulated industry, where you can’t just deploy and learn. Subscribe and the next piece will land in your inbox.