Your AI Adoption Number Is Lying to You

The metric everyone tracks, the one almost nobody does, and why the gap between them explains everything

Every enterprise AI dashboard in the world has an adoption number on it. Seats activated, prompts per user, tools deployed, percentage of workforce with access. The number goes up every quarter. The board is pleased. The CIO presents it with confidence.

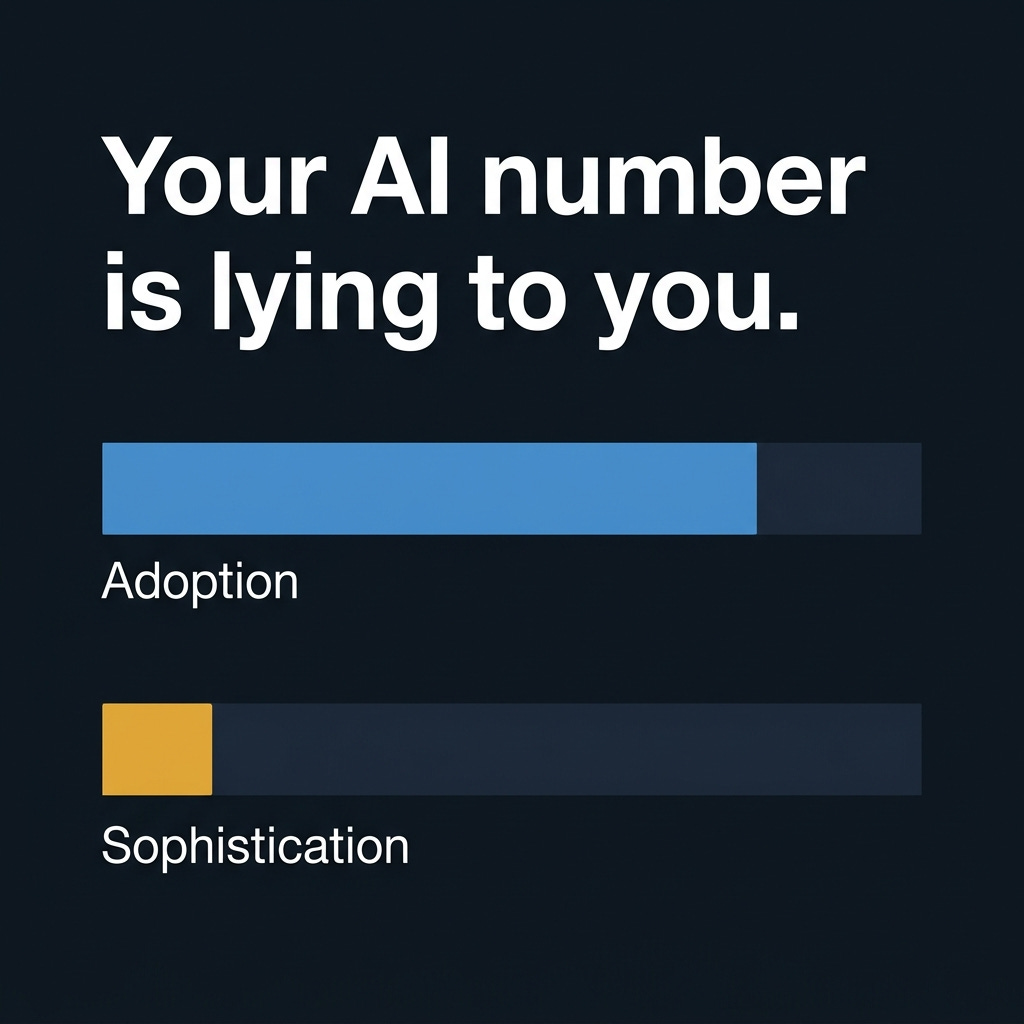

And almost none of it tells you whether AI is actually working.

The metric everyone loves is the metric that matters least

Adoption measures activity. Someone logged in. Someone typed a prompt. Someone opened Copilot and asked it to summarize an email they could have read in thirty seconds. All of that registers as adoption.

What it doesn’t measure is whether anyone’s work changed. Whether a contract review that used to take four hours now takes ninety minutes because the lawyer iterated through three rounds of AI-assisted markup. Whether the finance team built an automated reconciliation workflow or just asked ChatGPT to explain what a pivot table does. Whether the senior associate used AI to surface a pattern across two hundred documents that no one would have caught manually, or just used it to rewrite a slightly awkward email.

Those are fundamentally different behaviors. One is sophisticated use. The other is expensive autocomplete. And the adoption dashboard doesn’t distinguish between them.

The evidence arrived this week, from three directions at once

A Wharton School study tracking enterprise AI adoption over multiple years found a widening disconnect between executive enthusiasm and managerial reality. Nearly two-thirds of executives report becoming significantly more optimistic about AI over the past year. The managers implementing those same tools inside actual workflows report something closer to frustration. They see the constraints. They carry the operational burden. They don’t feel supported.

A separate survey of 2,400 knowledge workers found that 29% admit to actively undermining their company’s AI rollout. Among Gen Z workers, that figure reaches 44%. The tactics include feeding proprietary data into unapproved tools, refusing to complete AI training, and in some cases deliberately producing poor-quality output to make the tools look bad.

And a third study, from HFS Research, found that only 14% of enterprises have a clear AI strategy at all. The other 86% are deploying tools into a vacuum: no framework for what good use looks like, no feedback loop for what’s working, no definition of success beyond the adoption dashboard.

These aren’t three separate problems. They’re three symptoms of the same one: organizations measuring the wrong thing, at the wrong level, and mistaking activity for progress.

What sophistication actually measures

The Conversation Sophistication Score is a framework built on research from KPMG and the University of Texas at Austin. That research analyzed 1.4 million AI interactions across 2,500 employees and identified thirty behavioral characteristics that separate the highest-performing AI users from everyone else.

The finding that matters: sophistication isn’t correlated with frequency. The people using AI most often aren’t the ones using it best. The distinguishing behaviors are things like interaction depth (multi-turn conversations that build on previous outputs), task complexity (applying AI to genuinely difficult problems rather than simple lookups), iterative reasoning (treating AI output as a draft to be refined, not an answer to be accepted), breadth of application (using AI across multiple domains rather than one narrow use case), and fluency signals (adapting communication style and prompt structure to the specific task).

None of those show up on an adoption dashboard. You can have 95% adoption and 10% sophistication, and your metrics will tell you everything is going great until it very clearly isn’t.
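To make the gap concrete, here is a minimal Python sketch that tracks the two numbers side by side. It is a toy, not the KPMG/UT Austin scoring method, which this article does not spell out: the `Conversation` fields, the three-turn depth cutoff, the three-domain breadth cap, the equal weights, and the 0.5 threshold are all hypothetical assumptions chosen for illustration. It scores four of the signals named above (interaction depth, iterative reasoning, task complexity, breadth) and leaves out fluency, which doesn’t reduce neatly to a single logged field.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    """One logged AI conversation. All fields are hypothetical, for illustration."""
    turns: int             # number of user turns in the conversation
    revised_output: bool   # did the user refine an earlier AI output?
    complex_task: bool     # labeled as a difficult task rather than a simple lookup
    domain: str            # work area the conversation belongs to, e.g. "legal"


def sophistication_score(convs: list[Conversation]) -> float:
    """Toy 0-1 score: equal-weight average of four signals (assumed weights)."""
    if not convs:
        return 0.0
    n = len(convs)
    depth = sum(c.turns >= 3 for c in convs) / n            # share of multi-turn conversations
    iteration = sum(c.revised_output for c in convs) / n    # share that refined an output
    complexity = sum(c.complex_task for c in convs) / n     # share applied to hard problems
    breadth = min(len({c.domain for c in convs}) / 3, 1.0)  # saturates at 3 distinct domains
    return (depth + iteration + complexity + breadth) / 4


def summarize(users: dict[str, list[Conversation]],
              threshold: float = 0.5) -> tuple[float, float]:
    """Return (adoption_rate, sophistication_rate) over all users with access."""
    active = [name for name, convs in users.items() if convs]
    sophisticated = [name for name in active
                     if sophistication_score(users[name]) >= threshold]
    return len(active) / len(users), len(sophisticated) / len(users)


# Made-up sample data: frequency and sophistication point in opposite directions.
users = {
    "heavy_autocomplete": [Conversation(1, False, False, "email")] * 50,
    "deep_user": [
        Conversation(6, True, True, "legal"),
        Conversation(4, True, True, "finance"),
        Conversation(5, True, False, "research"),
    ],
    "light_user": [Conversation(1, False, False, "email")],
    "no_use": [],
}

adoption, sophistication = summarize(users)
print(f"adoption: {adoption:.0%}, sophistication: {sophistication:.0%}")
```

In this invented sample, the user with fifty conversations scores no better than the one who tried the tool once, and the dashboard would report 75% adoption while only 25% of users clear the sophistication bar. Keeping the two rates as separate outputs, rather than blending them into one number, is the point: each one hides what the other reveals.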

The sequence that actually works

The enterprises getting AI right aren’t doing anything exotic. They’re solving the structural problems before chasing the visible ones. They define what good AI use looks like before measuring whether it exists. They build governance, not as a compliance exercise but as a shared understanding of what’s allowed, what’s encouraged, and what’s off-limits. They create conditions where experimentation is safe and failure is data, not career risk.

And then, once that structure exists, they start measuring sophistication alongside adoption. Not instead of it. Alongside it. Because adoption without sophistication is just expensive access. And sophistication without adoption means you have a few brilliant users surrounded by a workforce that’s opted out.

The 14% of organizations that have a clear strategy? They’re the ones building this foundation. The other 86% are wondering why their adoption numbers keep climbing and their results don’t.

The dashboard isn’t broken. The measurement is.

If you found this useful, consider sharing it with someone leading an AI rollout right now. They probably have an adoption number. They probably don’t have a sophistication score. That gap is the article.